What the Agent Noticed

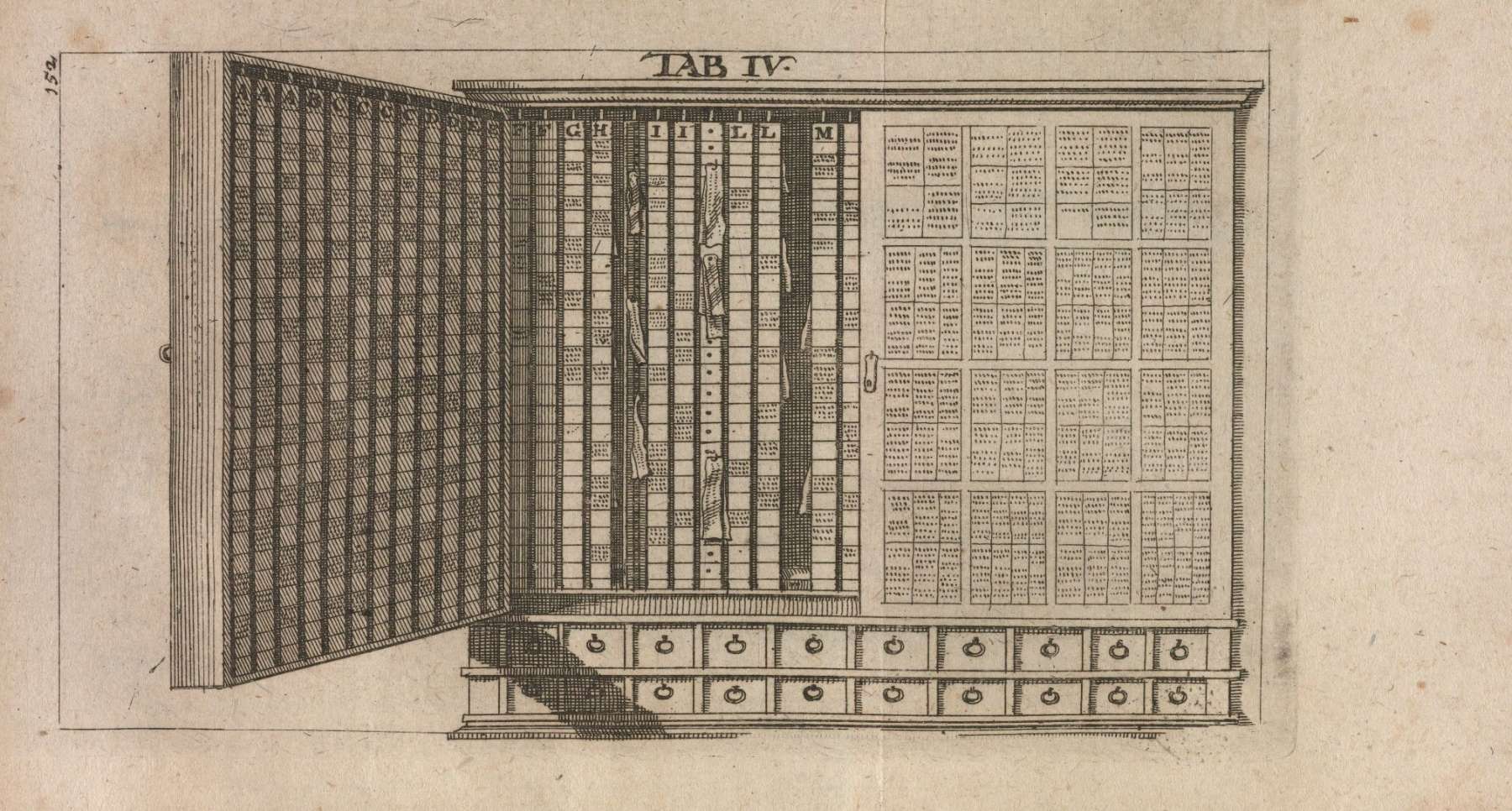

Illustration from De arte excerpendi by Vincent Placcius

Summary

Typed links, an agent to tend them, and the part of note-taking I was never going to do myself: https://github.com/jamesfishwick/slipbox-mcp

A few weeks ago, Claude proposed a link between two notes I'd never have crossed in my head.

The first was a January note on Liz Fong-Jones reframing what AI-assisted development actually is: "a language model changes you from a programmer who writes lines of code, to a programmer that manages the context the model has access to, prunes irrelevant things, adds useful material." I'd filed it under ai-coding, context-management, developer-experience.

The second was a February note on Jackson Mac Low—an avant-garde poet who, in the 1990s, took the output of a computer-poetry program and reworked it by hand: changed word order, added articles, inserted punctuation, and restructured lines into prime-number sequences. Mac Low called the algorithm's output raw material; he was the one who made it a poem. I'd filed that note under jackson-mac-low, creative-process, algorithmic-constraint.

Written seven weeks apart. Zero shared tags. Zero shared references. One about AI-assisted coding in 2026, one about experimental poetry in 1994.

But both said the same thing: when the algorithm does the generative work, the human's job is curation—pruning, ordering, and deciding what counts. Fong-Jones called it context curation. Mac Low called it editorial curation. Claude proposed a supports edge between the two, with a one-sentence rationale.

I opened the Mac Low note to review the proposal and near the end found a line from February I had no memory of writing:

"This model anticipates current AI-human creative partnerships: algorithm as generative constraint, human as editorial curator and aesthetic arbiter."

A poet working in 1994 had, in effect, described the epistemology of agentic knowledge work three decades before I built a tool to argue about it. My past self wrote the thesis of this essay and filed it under poetry.

This is a post about the tool that noticed and about the part of note-taking I'd been quietly failing at for years.

The graveyard

I've used Evernote, Notion, Roam, Obsidian. Read the books. Understood the method. I was good at the capture part and bad at the connection part, and eventually I stopped pretending the connection part was going to happen. Five hundred and fifty notes in, the slipbox wasn't a thinking tool—it was a dusty attic. Notes went in to die.

Finding connections across 550 notes is a working-memory problem I don't have the bandwidth to solve. When I wrote a new note, I'd link the two or three obvious neighbors. The other ten were notes I'd written six months earlier and no longer remembered existed. That's not a discipline problem. The job is simply the wrong size for a modern human outside of pure academia.

What changed: typed operations for an agent

When Claude Desktop shipped the Model Context Protocol (tools an AI can call to read, write, and reason over your data directly), I stopped thinking about note-taking as software I use and started thinking about it as software an agent uses. That's the shift: agentic AI isn't autocomplete or chat. It's AI with tools to act on your behalf—create, link, search, and analyze—rather than just suggest.

So I built a slipbox for the agent, not for me. The MCP server exposes a small typed vocabulary (create_note, create_link, find_similar_notes, analyze_note, find_clusters), and Claude runs the infrastructure while I do the thinking. I capture, write, and decide what matters. Claude searches the whole graph, proposes typed links, detects emergent clusters, and writes structure notes when a topic crosses a threshold. I approve.
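The propose-then-approve loop can be sketched in a few lines. This is a hypothetical illustration, not code from the repository: LinkProposal, ReviewQueue, and the note ids are names I made up for the example, and the real server may handle approval differently.

```python
from dataclasses import dataclass, field


@dataclass
class LinkProposal:
    source: str      # id of the note the link starts from
    target: str      # id of the note it points to
    link_type: str   # e.g. "supports"
    rationale: str   # the agent's one-sentence justification
    status: str = "pending"


@dataclass
class ReviewQueue:
    proposals: list = field(default_factory=list)
    accepted: list = field(default_factory=list)  # (source, link_type, target) edges

    def propose(self, source, target, link_type, rationale):
        """Agent side: queue a typed link. Nothing is added to the graph yet."""
        proposal = LinkProposal(source, target, link_type, rationale)
        self.proposals.append(proposal)
        return proposal

    def review(self, proposal, approve):
        """Human side: only an approved proposal becomes an edge."""
        proposal.status = "approved" if approve else "rejected"
        if approve:
            self.accepted.append((proposal.source, proposal.link_type, proposal.target))


queue = ReviewQueue()
p = queue.propose(
    "fong-jones-context",   # hypothetical note id
    "mac-low-editing",      # hypothetical note id
    "supports",
    "Both cast the human as curator of machine-generated material.",
)
queue.review(p, approve=True)
```

The point of the shape is that the agent can only add to the proposal list; the edge list changes when a human says yes.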

The felt difference after a year of using it: I stopped missing the ten connections. Not because I got better at remembering 550 notes but because the job is no longer mine.

Typed links, not backlinks

Two weeks ago, Andrej Karpathy described a version of this publicly [1]: ingest raw documents, compile them into a markdown wiki with backlinks and categorization, run health checks to find inconsistencies and propose new connections. He closed the thread:

"I think there is room here for an incredible new product instead of a hacky collection of scripts."

Karpathy's wiki has backlinks: X is linked to Y. This tool has typed links: X refines Y, X contradicts Y, X supports Y. The verb is the whole point.

A backlink tells you only that two notes are linked. A contradicts edge lets you ask "what in my slipbox disagrees with this claim?", a question a backlink graph cannot express. Vector similarity has the same limit in the other direction: it tells you what's close, not how the two are related. Similarity isn't relevance, and closeness isn't meaning.

Seven link types—extends, contradicts, supports, questions, refines, related, reference—is a small enough vocabulary to stay legible and a rich enough one to carry the shape of actual thought. When the agent proposes a refines link between a Mac Low note and a DIASTEX5 note, it isn't saying "these co-occur." It's making a claim about how one idea acts on the other. That claim is either useful or wrong, and either way it's a claim I can audit.
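Here is a minimal sketch of what the verb buys you, assuming links are stored as (source, type, target) triples. The seven type names come from the list above; the Link class, the notes_that helper, and the note ids are hypothetical and not the tool's actual API.

```python
from dataclasses import dataclass
from enum import Enum


class LinkType(Enum):
    EXTENDS = "extends"
    CONTRADICTS = "contradicts"
    SUPPORTS = "supports"
    QUESTIONS = "questions"
    REFINES = "refines"
    RELATED = "related"
    REFERENCE = "reference"


@dataclass(frozen=True)
class Link:
    source: str
    link_type: LinkType
    target: str


def notes_that(links, link_type, target):
    """Return ids of notes whose link of the given type points at `target`."""
    return [l.source for l in links if l.link_type is link_type and l.target == target]


links = [
    Link("note-a", LinkType.SUPPORTS, "claim"),
    Link("note-b", LinkType.CONTRADICTS, "claim"),
    Link("note-c", LinkType.RELATED, "claim"),
]

# Typed query: which notes disagree with the claim?
disagreeing = notes_that(links, LinkType.CONTRADICTS, "claim")

# Untyped backlink query: everything pointing at the claim, with no verb attached.
backlinks = [l.source for l in links if l.target == "claim"]
```

The backlink query returns all three notes and can't tell them apart. The typed query returns only the one that disagrees.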

The division of labor

The lesson after a year: give the agent typed operations, let it run the infrastructure, and keep the human on the thinking.

But raw file access isn't enough; the agent needs a vocabulary shaped like the work: explicit note types, explicit link types, search that understands semantic proximity, and cluster detection that surfaces themes before I've formalized them. Once that vocabulary exists, the division of labor falls out on its own. I capture, write, judge. Claude searches, proposes, connects, maintains.
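One cheap signal for cluster detection is tag co-occurrence: pairs of tags that keep showing up on the same notes hint at a theme nobody has named yet. The sketch below is an assumption about how that could work, not the server's actual algorithm; tag_pairs, min_notes, and the note ids are illustrative.

```python
from collections import Counter
from itertools import combinations


def tag_pairs(notes, min_notes=2):
    """Count how many notes carry each pair of tags.

    Keeps only pairs that appear together on at least `min_notes` notes.
    """
    counts = Counter()
    for tags in notes.values():
        # Sort so ("a", "b") and ("b", "a") count as the same pair.
        for pair in combinations(sorted(set(tags)), 2):
            counts[pair] += 1
    return {pair: n for pair, n in counts.items() if n >= min_notes}


notes = {
    "n1": ["ai-coding", "context-management"],
    "n2": ["ai-coding", "context-management", "developer-experience"],
    "n3": ["creative-process", "algorithmic-constraint"],
}
candidates = tag_pairs(notes)
```

With these three notes, the only pair that crosses the threshold is ai-coding with context-management. A real implementation would presumably combine this with link structure, since the most interesting connections are often the ones with zero shared tags.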

Which is why the tool is deliberately boring. No fancy UI. No real-time sync. No collaboration features. Note storage, typed operations, batch analysis. That's enough when the agent runs the rest.

What it is

Python! An MCP server that exposes 19 tools and 6 workflow prompts. SQLite with FTS5 for BM25-ranked full-text search across 550+ notes. Seven link types. Cluster analysis on graph structure and tag co-occurrence. Notes are flat markdown with YAML frontmatter; the database is an index, not the source of truth, and you can delete it and rebuild it from the files at any time. Works with any MCP client (Claude Desktop, Claude Code, OpenCode, Copilot, anything else that speaks MCP) and alongside any markdown editor (Obsidian, Foam, Logseq) for visual editing. Open source, MIT licensed.
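To make "the database is an index, not the source of truth" concrete, here is a rough sketch that rebuilds an FTS5 index from markdown text and runs a BM25-ranked query. The table layout, function names, and sample notes are assumptions for illustration, not the project's schema. The frontmatter parser is deliberately naive and reads only a title key; it also assumes your Python's SQLite build includes FTS5, which most do.

```python
import sqlite3


def parse_note(text):
    """Split a note into (title, body), reading only `title:` from the frontmatter."""
    title, body = "", text
    if text.startswith("---\n"):
        header, _, body = text[4:].partition("\n---\n")
        for line in header.splitlines():
            key, _, value = line.partition(":")
            if key.strip() == "title":
                title = value.strip()
    return title, body.strip()


def rebuild_index(notes):
    """Build a fresh in-memory FTS5 index from {note_id: markdown text}."""
    db = sqlite3.connect(":memory:")
    db.execute("CREATE VIRTUAL TABLE notes USING fts5(id UNINDEXED, title, body)")
    for note_id, text in notes.items():
        title, body = parse_note(text)
        db.execute("INSERT INTO notes VALUES (?, ?, ?)", (note_id, title, body))
    return db


def search(db, query):
    """Return note ids ordered by BM25. In FTS5, lower bm25() values rank first."""
    rows = db.execute(
        "SELECT id FROM notes WHERE notes MATCH ? ORDER BY bm25(notes)", (query,)
    )
    return [row[0] for row in rows]


# Made-up note contents standing in for files on disk.
files = {
    "mac-low": "---\ntitle: Mac Low and DIASTEX5\n---\nThe human acts as editorial curator of algorithmic output.",
    "fong-jones": "---\ntitle: Context management\n---\nThe programmer manages the context the model has access to.",
}
db = rebuild_index(files)
hits = search(db, "curator")
```

Because rebuild_index starts from nothing each time, throwing the database away costs only the time it takes to re-read the files.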

Code and full roadmap: github.com/jamesfishwick/slipbox-mcp. Setup takes about ten minutes. It's early-stage software—no automated schema migrations yet, macOS-focused automation scripts, and the search index can drift if you edit in Obsidian while the server is running. Back up your notes before you experiment. The README covers the caveats in full.

If you try it, tell me what you discover, what breaks, and what you actually need versus what sounded useful on paper.

What Mac Low already knew

What Mac Low worked out three decades ago with DIASTEX5, a 1994 computer-poetry program, wasn't a metaphor for any of this. It was the prior art. A poet had already described the shape of the partnership: algorithm as generative constraint, human as editorial curator and aesthetic arbiter. The program produced raw material a human wouldn't have written unaided. Mac Low did the part only a poet could do: decide what was worth keeping, what belonged where, what the poem meant.

Fong-Jones described the same division in programming terms, seven weeks before I wrote the Mac Low note. I didn't see the connection until Claude put the two notes next to each other. What's new in 2026 isn't the division of labor—it's that the algorithm can now reach back into your own archive and tell you this supports that, across domains you'd filed in different rooms. DIASTEX5 generated forward. The agent curates backward, across the things you already wrote and forgot.

My past self described all of this in February and filed it under poetry. It took the agent to find it. And it's taken me this whole essay to admit that Mac Low got there first.

-

[1] Andrej Karpathy on X, April 2, 2026.